A sampling distribution usually has to be imagined, because we almost always do only one experiment or take only one sample, so we never observe the sampling distribution directly. A sampling distribution is abstract: it describes variability from sample to sample, not within a sample. Multiple samples may not always be available to the statistician.

We call the standard deviation of the sampl-ing distribution the “standard error” to distinguish it from the standard deviation of the sample distribution. You might find it helpful to remember this by interpreting the word “error” in standard error as reflecting sampling “error”.

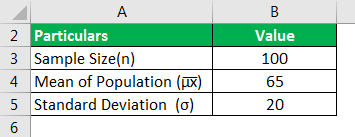

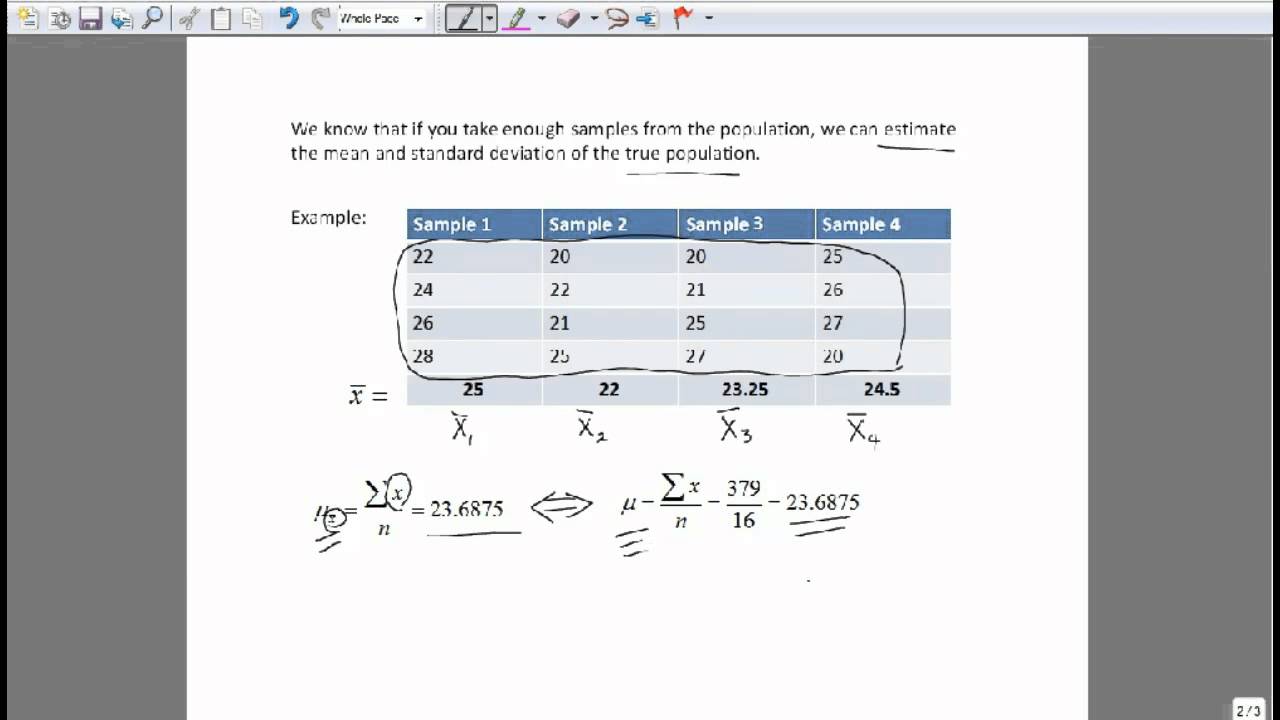

What is the difference between STANDARD DEVIATION and STANDARD ERROR?

The standard deviation is a measure of the variability of a single sample of observations. Let’s say we have a sample of 10 plant heights. We can say that our sample has a mean height of 10 cm and a standard deviation of 5 cm. The 5 cm can be thought of as a measure of the average distance of each individual plant height from the mean of the plant heights.

The standard error, on the other hand, is a measure of the variability of a set of means. According to Wikipedia, the standard error of a statistic is the standard deviation of its sampling distribution or an estimate of that standard deviation. In other words, it measures how precisely a sample statistic represents the corresponding population value. It can be applied in statistics and economics, is especially useful in the field of econometrics, and is relevant to much of the data drawn and used by academicians, statisticians, researchers, marketers, analysts, etc.

Let’s say that instead of taking just one sample of 10 plant heights from a population of plant heights we take 100 separate samples of 10 plant heights. We calculate the mean of each of these samples and now have a sample of means (usually called a sampling distribution). The standard deviation of this set of mean values is the standard error.

In lieu of taking many samples, one can estimate the standard error from a single sample. This estimate is derived by dividing the sample standard deviation by the square root of the sample size. How good this estimate is depends on the shape of the original distribution of sampling units (the closer to normal the better) and on the sample size (the larger the sample the better). The standard error turns out to be an extremely important statistic, because it is used both to construct confidence intervals around estimates of population means (the half-width of the confidence interval is the standard error times the critical value of t) and in significance testing. (Taken from comments by John W.)

A thought experiment about sampling distributions:

Imagine you take a random sample of individuals from a target population, measure something and then calculate a sample statistic, the “mean” let’s say. You calculate the mean in the sample because what you really want to know is the mean in the population, and the sample mean is a point estimate of this population parameter. Imagine you take another independent random sample and calculate another mean. It is highly likely that it would be different to the first mean, because it is a different sample: it was selected completely independently of the first sample, and individuals were selected by a random process. Imagine you keep doing this over and over again, each time calculating a mean and recording its value. The sample means would vary from sample to sample and you could plot their distribution with a histogram.

We call this distribution the sampling distribution. We call it sampl-ing because it is the distribution from “sampl-ing” lots of times. This is different to the “sample” distribution, which is the distribution of the observed data. The spread or standard deviation of this sampling distribution would capture the sample-to-sample variability of your estimate of the population mean. It would thus be a measure of the amount of uncertainty in your estimate of the population mean, or “sampling variation” or “sampling error”. You can also see it as a measure of precision of the point estimate, in this case the mean. You might imagine that means calculated from bigger samples would vary less from sample to sample, and likewise, that means calculated from samples taken from populations with less variation would vary less from sample to sample. This would mean more precise point estimates.

A.2 Simulating sampling from an underlying population.

To illustrate the concept of sampling distributions, we’ll simulate drawing samples from an underlying population that we’re trying to estimate statistics about. This will allow us to compare the various statistics we calculate and their sampling distributions to their true values.
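The plant-height example can be tried out in a few lines of Python. The sketch below is only an illustration under stated assumptions: it supposes the population of plant heights is normal with mean 10 cm and standard deviation 5 cm (the text gives those numbers for a sample, not for the population), and the function name `simulate_standard_error` and its parameters are made up for this example. It draws 100 samples of 10 heights, takes the standard deviation of the 100 sample means, and compares that with the single-sample estimate (standard deviation divided by the square root of the sample size).

```python
import math
import random
import statistics


def simulate_standard_error(pop_mean=10.0, pop_sd=5.0, sample_size=10,
                            n_samples=100, seed=0):
    """Draw repeated samples and compare two routes to the standard error.

    The normal population and its parameters are assumptions made for
    illustration only.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible

    # Draw n_samples independent samples and record each sample mean.
    samples = [[rng.gauss(pop_mean, pop_sd) for _ in range(sample_size)]
               for _ in range(n_samples)]
    means = [statistics.mean(s) for s in samples]

    # Route 1: standard deviation of the simulated sampling distribution.
    se_from_means = statistics.stdev(means)

    # Route 2: estimate from a single sample, s / sqrt(n).
    first_sample = samples[0]
    se_from_one_sample = statistics.stdev(first_sample) / math.sqrt(sample_size)

    # True value for this assumed population: sigma / sqrt(n).
    se_theory = pop_sd / math.sqrt(sample_size)
    return means, se_from_means, se_from_one_sample, se_theory


means, se_means, se_single, se_theory = simulate_standard_error()
print(f"SD of {len(means)} sample means: {se_means:.2f}")
print(f"s / sqrt(n) from one sample:   {se_single:.2f}")
print(f"sigma / sqrt(n):               {se_theory:.2f}")
```

With these assumed settings the first and third numbers should land close to each other, while the single-sample estimate can wander further because it rests on only 10 observations. Raising `sample_size` should shrink all three values, in line with the claim that means from bigger samples vary less.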